BrianFagioli writes: Canonical has joined the Rust Foundation as a Gold Member, signaling a deeper investment in the Rust programming language and its role in modern infrastructure. The company already maintains an up-to-date Rust toolchain for Ubuntu and has begun integrating Rust into parts of its stack, citing memory safety and reliability as key drivers. By joining at a higher tier, Canonical is not just adopting Rust but also stepping closer to its governance and long-term direction. The move also highlights ongoing tensions in Rust's ecosystem. While Rust can reduce entire classes of bugs, it often depends heavily on external crates, which can introduce complexity and auditing challenges, especially in enterprise environments. Canonical appears aware of that tradeoff and is positioning itself to influence how the ecosystem evolves, as Rust continues to gain traction across Linux and beyond. "As the publisher of Ubuntu, we understand the critical role systems software plays in modern infrastructure, and we see Rust as one of the most important tools for building it securely and reliably. Joining the Rust Foundation at the Gold level allows us to engage more directly in language and ecosystem governance, while continuing to improve the developer experience for Rust on Ubuntu," said Jon Seager, VP Engineering at Canonical. "Of particular interest to Canonical is the security story behind the Rust package registry, crates.io, and minimizing the number of potentially unknown dependencies required to implement core concerns such as async support, HTTP handling, and cryptography -- especially in regulated environments."

Advancing Open Source AI, NVIDIA Donates Dynamic Resource Allocation Driver for GPUs to Kubernetes Community

Artificial intelligence has rapidly emerged as one of the most critical workloads in modern computing.

For the vast majority of enterprises, this workload runs on Kubernetes, an open source platform that automates the deployment, scaling and management of containerized applications.

To help the global developer community manage high-performance AI infrastructure with greater transparency and efficiency, NVIDIA is donating a critical piece of software — the NVIDIA Dynamic Resource Allocation (DRA) Driver for GPUs — to the Cloud Native Computing Foundation (CNCF), a vendor-neutral organization dedicated to fostering and sustaining the cloud-native ecosystem.

Announced today at KubeCon Europe, CNCF’s flagship conference running this week in Amsterdam, the donation moves the driver from being vendor-governed to offering full community ownership under the Kubernetes project. This open environment encourages a wider circle of experts to contribute ideas, accelerate innovation and help ensure the technology stays aligned with the modern cloud landscape.

“NVIDIA’s deep collaboration with the Kubernetes and CNCF community to upstream the NVIDIA DRA Driver for GPUs marks a major milestone for open source Kubernetes and AI infrastructure,” said Chris Aniszczyk, chief technology officer of CNCF. “By aligning its hardware innovations with upstream Kubernetes and AI conformance efforts, NVIDIA is making high-performance GPU orchestration seamless and accessible to all.”

In addition, in collaboration with the CNCF’s Confidential Containers community, NVIDIA has introduced GPU support for Kata Containers, lightweight virtual machines that act like containers. This extends hardware acceleration to more strongly isolated environments, separating workloads for increased security so that organizations can more easily implement confidential computing to safeguard data.

Simplifying AI Infrastructure

Historically, managing the powerful GPUs that fuel AI within data centers required significant effort.

This contribution is designed to make high-performance computing more accessible. Key benefits for developers include:

- Improved Efficiency: The driver allows for smarter sharing of GPU resources, making more effective use of computing power, with support for NVIDIA Multi-Process Service and NVIDIA Multi-Instance GPU technologies.

- Massive Scale: It provides native support for connecting systems together, including with NVIDIA Multi-Node NVLink interconnect technology. This is essential for training massive AI models on NVIDIA Grace Blackwell systems and next-generation AI infrastructure.

- Flexibility: Developers can dynamically reconfigure their hardware to suit their needs, changing how resources are allocated on the fly.

- Precision: The software supports fine-tuned requests, allowing users to ask for the specific computing power, memory settings or interconnect arrangement needed for their applications.
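To make the idea of "fine-tuned requests" concrete, here is a minimal toy sketch in Python. It is not the NVIDIA DRA Driver or the Kubernetes API: the `Gpu`, `Request` and `allocate` names, the attribute fields and the "smallest fitting device" rule are all assumptions made for illustration. It only shows the general pattern of asking for a device by attributes (memory, interconnect) rather than by a simple count.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Gpu:
    # Hypothetical device record; field names are illustrative only.
    name: str
    memory_gib: int
    interconnect: str          # e.g. "nvlink" or "pcie" (illustrative labels)
    allocated: bool = False


@dataclass
class Request:
    # Hypothetical request: minimum memory plus an optional interconnect.
    min_memory_gib: int
    interconnect: Optional[str] = None   # None means "any interconnect"


def allocate(pool: list[Gpu], req: Request) -> Optional[Gpu]:
    """Mark and return the smallest free GPU that satisfies req, or None."""
    candidates = [
        g for g in pool
        if not g.allocated
        and g.memory_gib >= req.min_memory_gib
        and (req.interconnect is None or g.interconnect == req.interconnect)
    ]
    if not candidates:
        return None
    # Assumed policy: prefer the smallest device that fits, to limit waste.
    chosen = min(candidates, key=lambda g: g.memory_gib)
    chosen.allocated = True
    return chosen


pool = [
    Gpu("gpu-a", 40, "pcie"),
    Gpu("gpu-b", 80, "nvlink"),
    Gpu("gpu-c", 80, "pcie"),
]
first = allocate(pool, Request(min_memory_gib=64, interconnect="nvlink"))
print(first.name if first else "no match")
```

A real scheduler would weigh many more factors; the point of the sketch is only that the request describes what the workload needs, and the allocator decides which device satisfies it.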

A Collaborative, Industry-Wide Effort

NVIDIA is collaborating with industry leaders — including Amazon Web Services, Broadcom, Canonical, Google Cloud, Microsoft, Nutanix, Red Hat and SUSE — to drive these features forward for the benefit of the entire cloud-native ecosystem.

“Open source will be at the core of every successful enterprise AI strategy, bringing standardization to the high-performance infrastructure components that fuel production AI workloads,” said Chris Wright, chief technology officer and senior vice president of global engineering at Red Hat. “NVIDIA’s donation of the NVIDIA DRA Driver for GPUs helps to cement the role of open source in AI’s evolution, and we look forward to collaborating with NVIDIA and the broader community within the Kubernetes ecosystem.”

“Open source software and the communities that sustain it are a cornerstone of the infrastructure used for scientific computing and research,” said Ricardo Rocha, lead of platforms infrastructure at CERN. “For organizations like CERN, where efficiently analyzing petabytes of data is essential to discovery, community-driven innovation helps accelerate the pace of science. NVIDIA’s donation of the DRA Driver strengthens the ecosystem researchers rely on to process data across both traditional scientific computing and emerging machine learning workloads.”

Expanding the Open Source Horizon

This donation is just part of NVIDIA’s broader initiatives to support the open source community. For example, NVSentinel — a system for GPU fault remediation — and AI Cluster Runtime, an agentic AI framework, were announced at GTC last week.

In addition, NVIDIA announced at GTC new open source projects including the NVIDIA NemoClaw reference stack and NVIDIA OpenShell runtime for securely running autonomous agents. OpenShell provides fine-grained programmable policy security and privacy controls, and natively integrates with Linux, eBPF and Kubernetes.

NVIDIA also today announced that its high-performance AI workload scheduler, the KAI Scheduler, has been onboarded as a CNCF Sandbox project — a key step toward fostering broader collaboration and ensuring the technology evolves alongside the needs of the wider cloud-native ecosystem. Developers and organizations can use and contribute to the KAI Scheduler today.

NVIDIA remains committed to actively maintaining and contributing to Kubernetes and CNCF projects to help meet the rigorous demands of enterprise AI customers.

In addition, following the release of NVIDIA Dynamo 1.0, NVIDIA is expanding the Dynamo ecosystem with Grove, an open source Kubernetes application programming interface for orchestrating AI workloads on GPU clusters. Grove, which enables developers to express complex inference systems in a single declarative resource, is being integrated with the llm-d inference stack for wider adoption in the Kubernetes community.

Developers and organizations can begin using and contributing to the NVIDIA DRA Driver today.

Visit the NVIDIA booth at KubeCon to see live demos of this technology in action.

Artificial intelligence holds promise for helping doctors diagnose patients and personalize treatment options. However, an international group of scientists led by MIT cautions that AI systems, as currently designed, carry the risk of steering doctors in the wrong direction because they may overconfidently make incorrect decisions.

One way to prevent these mistakes is to program AI systems to be more “humble,” according to the researchers. Such systems would reveal when they are not confident in their diagnoses or recommendations and would encourage users to gather additional information when the diagnosis is uncertain.

“We’re now using AI as an oracle, but we can use AI as a coach. We could use AI as a true co-pilot. That would not only increase our ability to retrieve information but increase our agency to be able to connect the dots,” says Leo Anthony Celi, a senior research scientist at MIT’s Institute for Medical Engineering and Science, a physician at Beth Israel Deaconess Medical Center, and an associate professor at Harvard Medical School.

Celi and his colleagues have created a framework that they say can guide AI developers in designing systems that display curiosity and humility. This new approach could allow doctors and AI systems to work as partners, the researchers say, and help prevent AI from exerting too much influence over doctors’ decisions.

Celi is the senior author of the study, which appears today in BMJ Health and Care Informatics. The paper’s lead author is Sebastián Andrés Cajas Ordoñez, a researcher at MIT Critical Data, a global consortium led by the Laboratory for Computational Physiology within the MIT Institute for Medical Engineering and Science.

Instilling human values

Overconfident AI systems can lead to errors in medical settings, according to the MIT team. Previous studies have found that ICU physicians defer to AI systems that they perceive as reliable even when their own intuition goes against the AI suggestion. Physicians and patients alike are more likely to accept incorrect AI recommendations when they are perceived as authoritative.

In place of systems that offer overconfident but potentially incorrect advice, health care facilities should have access to AI systems that work more collaboratively with clinicians, the researchers say.

“We are trying to include humans in these human-AI systems, so that we are facilitating humans to collectively reflect and reimagine, instead of having isolated AI agents that do everything. We want humans to become more creative through the usage of AI,” Cajas Ordoñez says.

To create such a system, the consortium designed a framework that includes several computational modules that can be incorporated into existing AI systems. The first of these modules requires an AI model to evaluate its own certainty when making diagnostic predictions. Developed by consortium members Janan Arslan and Kurt Benke of the University of Melbourne, the Epistemic Virtue Score acts as a self-awareness check, ensuring the system’s confidence is appropriately tempered by the inherent uncertainty and complexity of each clinical scenario.

With that self-awareness in place, the model can tailor its response to the situation. If the system detects that its confidence exceeds what the available evidence supports, it can pause and flag the mismatch, requesting specific tests or history that would resolve the uncertainty, or recommending specialist consultation. The goal is an AI that not only provides answers but also signals when those answers should be treated with caution.
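The behavior described above can be sketched in a few lines of Python. This is a hypothetical illustration, not the researchers' framework and not the Epistemic Virtue Score, whose definition is not given here: the `Assessment` fields, the `evidence_support` number and the `tolerance` threshold are all assumptions. The sketch only shows the control flow of comparing stated confidence against what the evidence supports, then either reporting, asking for more information, or recommending a specialist.

```python
from dataclasses import dataclass, field


@dataclass
class Assessment:
    diagnosis: str
    model_confidence: float    # model's own stated probability, 0.0 to 1.0
    evidence_support: float    # assumed score: how far the available data backs it
    missing_inputs: list[str] = field(default_factory=list)


def respond(a: Assessment, tolerance: float = 0.15) -> dict:
    """Choose a response based on the gap between confidence and evidence."""
    gap = a.model_confidence - a.evidence_support
    if gap <= tolerance:
        # Confidence is roughly in line with the evidence: report normally.
        return {"action": "report", "diagnosis": a.diagnosis, "flag": None}
    flag = "confidence exceeds available evidence"
    if a.missing_inputs:
        # Ask for the specific tests or history that could resolve the gap.
        return {
            "action": "request_information",
            "diagnosis": a.diagnosis,
            "flag": flag,
            "requests": list(a.missing_inputs),
        }
    # Nothing specific to request: suggest a second opinion instead.
    return {"action": "recommend_specialist", "diagnosis": a.diagnosis, "flag": flag}


case = Assessment("sepsis", 0.90, 0.50, ["blood culture", "lactate level"])
print(respond(case)["action"])
```

How the evidence score would be computed is the hard part and is left open here; the sketch assumes it is supplied by some other component.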

“It’s like having a co-pilot that would tell you that you need to seek a fresh pair of eyes to be able to understand this complex patient better,” Celi says.

Celi and his colleagues have previously developed large-scale databases that can be used to train AI systems, including the Medical Information Mart for Intensive Care (MIMIC) database from Beth Israel Deaconess Medical Center. His team is now working on implementing the new framework into AI systems based on MIMIC and introducing it to clinicians in the Beth Israel Lahey Health system.

This approach could also be implemented in AI systems that are used to analyze X-ray images or to determine the best treatment options for patients in the emergency room, among others, the researchers say.

Toward more inclusive AI

This study is part of a larger effort by Celi and his colleagues to create AI systems that are designed by and for the people who are ultimately going to be most impacted by these tools. Many AI models are trained on publicly available data from the United States, such as MIMIC, which can lead to the introduction of biases toward a certain way of thinking about medical issues, and exclusion of others.

Bringing in more viewpoints is critical to overcoming these potential biases, says Celi, emphasizing that each member of the global consortium brings a distinct perspective to a broader, collective understanding.

Another problem with existing AI systems used for diagnostics is that they are usually trained on electronic health records, which weren’t originally intended for that purpose. This means that the data lack much of the context that would be useful in making diagnoses and treatment recommendations. Additionally, many patients never get included in those datasets because of lack of access, such as people who live in rural areas.

At data workshops hosted by MIT Critical Data, groups of data scientists, health care professionals, social scientists, patients, and others work together on designing new AI systems. Before beginning, everyone is prompted to think about whether the data they’re using captures all the drivers of whatever they aim to predict, ensuring they don’t inadvertently encode existing structural inequities into their models.

“We make them question the dataset. Are they confident about their training data and validation data? Do they think that there are patients that were excluded, unintentionally or intentionally, and how will that affect the model itself?” he says. “Of course, we cannot stop or even delay the development of AI, not just in health care, but in every sector. But, we must be more deliberate and thoughtful in how we do this.”

The research was funded by the Boston-Korea Innovative Research Project through the Korea Health Industry Development Institute.

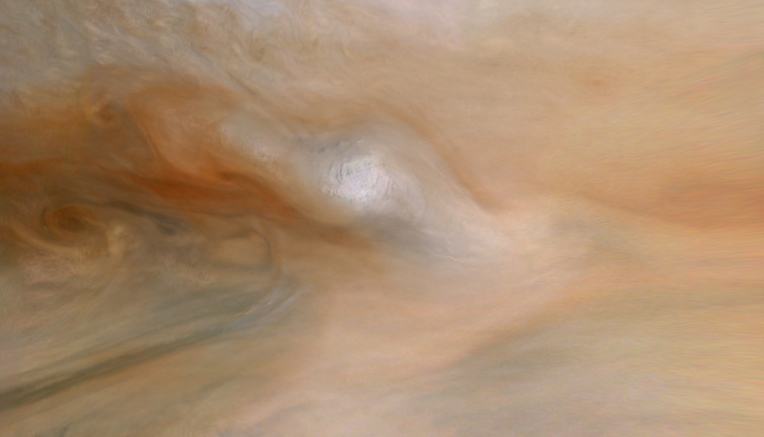

Jupiter's colossal storms generate lightning flashes at least 100 times more powerful than those on Earth, according to scientists analyzing data from NASA's Juno spacecraft.

The findings were published March 20 in the journal AGU Advances and were based on data recorded by Juno in 2021 and 2022, after NASA granted an extension to the spacecraft's operations upon completing a five-year science campaign at Jupiter. Juno remains in good health, but NASA officials have not said if they will approve another extension for the mission. The issue is money.

Questions about the future of Juno and more than a dozen other robotic science missions began swirling nearly a year ago, when the Trump administration asked mission leaders to submit "closeout" plans for how to turn off their spacecraft. Ars first reported the news soon after the White House released a budget request that called for slashing NASA's science budget by nearly half.

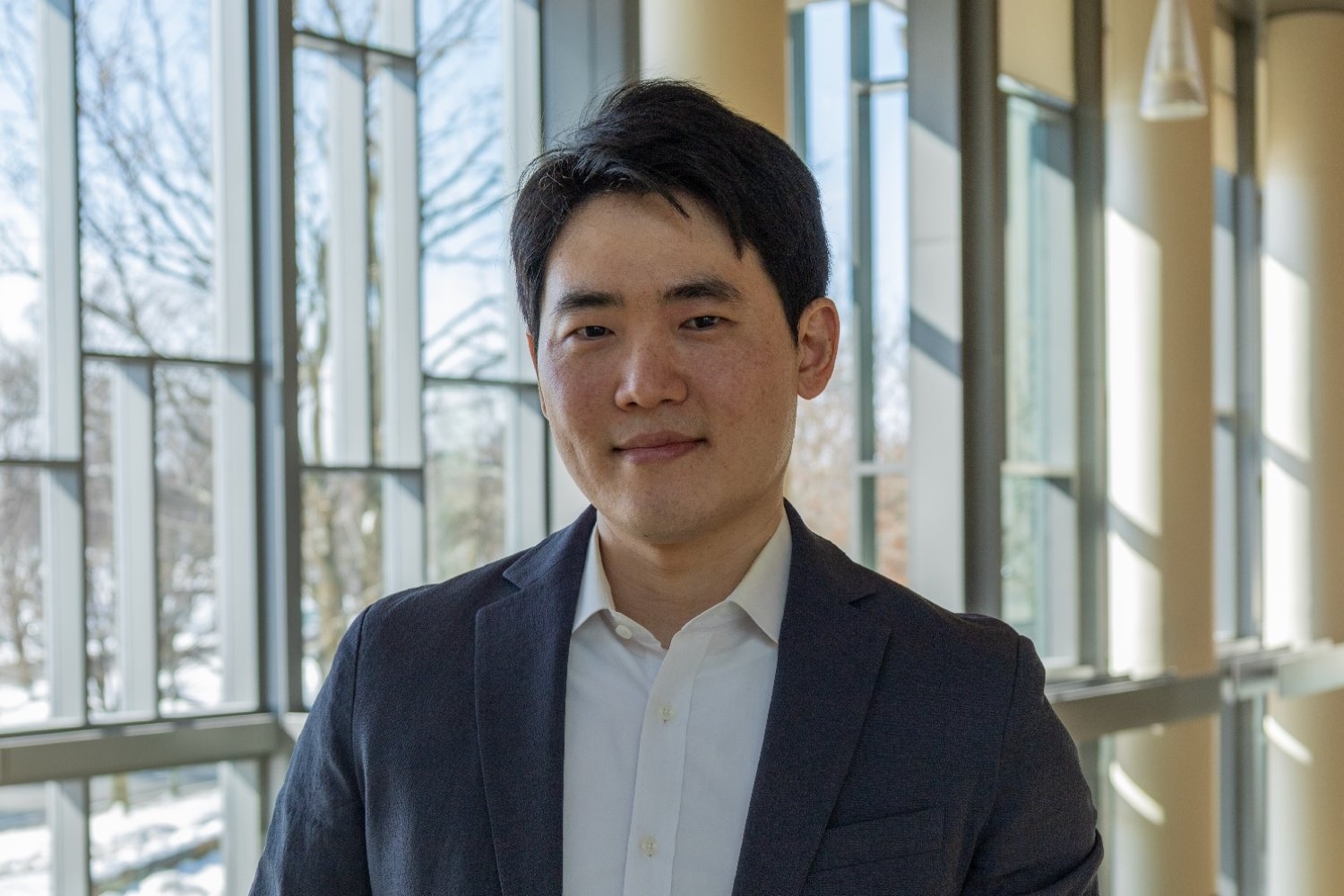

The sense of support and community was palpable when Sojun Park, a postdoc at the MIT Center for International Studies (CIS), delivered a recent presentation on The Global Diffusion of AI Technologies and Its Political Drivers. The event, part of the CIS Global Research and Policy Seminar, filled the venue with audience members from across MIT.

“My work is directly connected to what CIS faculty have previously done on international trade and security,” Park said afterwards. “If I hadn’t received a postdoctoral fellowship and come to MIT, I wouldn’t have been able to think through the security implications of my intellectual property research. I’ve been tremendously motivated by these scholars.”

Park’s time at CIS has been both grounding and transformative, offering him a scholarly home that has shaped his research and helped broaden his intellectual horizons.

Pursuing interdisciplinary research and connections

Before pursuing a tenure-track position, Park set his sights on conducting research at MIT. When he came across a public posting about the CIS Postdoctoral Associate Program, he took a chance and applied.

“My own research is interdisciplinary, and I knew that I could really benefit from the interdisciplinary environment at MIT, and specifically at CIS, where faculty are coming not only from political science, but also affiliated with the Department of Economics and MIT Sloan [School of Management],” he says.

Park was thrilled to receive the paid fellowship, which offers an academic year at MIT and dedicated office space at CIS. At MIT, he is free to use his time toward his own research, and has found value in pursuing topics that are of interest to the CIS community — whether it’s AI or global governance. He’s published prolifically along the way, including two articles in the Review of International Organizations and the Review of International Political Economy.

He’s also continued to work on his forthcoming book, “From Privilege to Prosperity: Knowledge Diffusion and the Global Governance of Intellectual Property,” which examines how technologies can be transferred legitimately across borders. “By 'legitimately,' I am asking under what circumstances would firms volunteer to share their technologies? I’m interested in institutions and institutional environments that allow large businesses to share their technologies with smaller businesses based in the developing world that may not possess the ability to come up with their own technologies,” he explains.

During the spring 2026 semester, he is collaborating with the center’s Undergraduate Fellows Program. This program enables postdocs to work on their research projects with MIT undergraduates. Park is working with two CIS undergraduate fellows to develop a new dataset examining international trade in green technologies. This opportunity reconnects Park to his early academic experiences in South Korea that set him on the path to MIT.

Path to MIT

“Students in South Korea are trained to be problem-solvers,” explains Park, who was born and raised in Seoul. The country’s rigorous college entrance exams reward those who can answer the most questions quickly and accurately in a limited amount of time.

While taking a test in high school, Park stumbled over a question that he couldn’t answer, regardless of how much time he spent concentrating on it. He handed in the exam, but took the problem home and spent hours puzzling over it — he just couldn’t let it go. “In hindsight, I see this as the moment I decided that I wanted to become a scholar,” Park says.

While majoring in international studies and economics (statistics) at Korea University, he had the opportunity to participate in a semester-long exchange program at the University of Texas at Austin. There, Park enrolled in a political science course on game theory that explored how individual state actors’ decisions influenced one another’s choices and outcomes in trade, conflict, and diplomacy. The instructor used the ongoing war between North and South Korea as a case study, demonstrating the unique circumstances for escalation or de-escalation depending upon how the key actors made choices along the way.

“I saw for the first time how quantitative methods could be applied to international relations and political economy,” Park says — and he knew that his next step was going to be graduate work in the United States. He began a joint MA and PhD program in political science at Princeton University the following year, supported by a Fulbright Fellowship.

Park’s 2025 dissertation examined the global governance of intellectual property rights — and it was timely. He began his PhD program in 2018, “the point at which the U.S. and China trade war had just begun.” During the pandemic, he was moved by the ongoing debates regarding vaccine inequality. “I realized then that intellectual property was at the center of these global economic challenges.” With little political science research on the topic, he “set out to create a systemic framework” to study it.

Simultaneously, he served as a teaching assistant in undergraduate courses in statistical analysis and realized that he deeply enjoyed the experience of teaching and interacting with students. It was a very different experience from his own college years.

“In South Korea, it’s common for the learning environment to be one in which the professor just delivers lectures, but I found that in the United States’ higher education system, the classroom is truly interactive. I learned something from each of my students.” Soon, Park was certain that he not only wanted to build a career in academic research, but also a future that heavily incorporated teaching and mentoring students.

Before graduating, he spent a year at Georgetown University as a predoctoral fellow affiliated with the Mortara Center for International Studies. This experience enabled him to explore the policy implications of his research and engage with policymakers in Washington — skills he will draw on in his new position.

Lasting lessons from CIS

Park recently accepted a position as assistant professor at the National University of Singapore. Beginning fall 2026, he will be teaching graduate students affiliated with the school of public policy — most of whom will have career experience as practitioners in the public or private sectors.

He’ll take many lessons from MIT to his new academic home, he says. “Based on what I learned in the United States, I’ll make the learning environment in the graduate courses I teach much more interactive and collaborative.”

At CIS, Mihaela Papa, director of research and principal research scientist, and Evan Lieberman, the center’s director and professor of political science, connected Park to associated faculty whose research interests were related to his own. “Meeting with all of these scholars whose research relates in some way to intellectual property rights made me think about how my own interests can expand to other topics,” Park explains.

But the biggest takeaway of all is that he learned how to share his own research with scholars who study unfamiliar topics, to exchange ideas and discover commonality. “I’ll never stop using the communication skills that I got here at MIT,” Park says.

Wing is expanding its drone delivery service to the San Francisco Bay Area. "The drone delivery startup has been rapidly expanding to metro areas across the US, but is now targeting the tech-friendly Silicon Valley region," reports Engadget. From the report: Going back to its inaugural deliveries, Wing ferried office supplies across Google's Mountain View campus in the Bay Area with its automated drones. It was still a startup out of Google's X, The Moonshot Factory incubator at the time, but early users were already asking for home delivery services, according to Wing. Now, Wing's latest delivery drones can deliver groceries, food, or whatever else fits in a small package weighing up to five pounds in 30 minutes or less to Bay Area residents. Earlier this year, Wing expanded its service to an additional 150 Walmart stores across the U.S. Service began recently in Atlanta and Charlotte, and it's coming soon to Los Angeles, Houston, Cincinnati, St. Louis, Miami and other major U.S. cities to be announced later. "By 2027, Walmart and Wing say they'll have a network of more than 270 drone delivery locations nationwide."

Epoch and the original problem author confirm GPT-5.4 Pro solved a FrontierMath Open Problem for the first time

Link to problem:

https://epoch.ai/frontiermath/open-problems/ramsey-hypergraphs

Generally, when you hear “water use” and “sustainability,” you expect those words to be followed by some bad news. Humanity’s enduring ability to ignore the math of declining water supplies is almost impressive. But there are cases where actions have successfully reversed our loss of water resources. A new paper in Science by Scott Jasechko of the University of California, Santa Barbara, examines documented cases of groundwater recovery around the world to identify which strategies have worked.

Groundwater is invaluable for many reasons. For one, it’s (usually) cleaner than surface water. It’s also right under your feet and often close enough to the surface that it doesn’t take much energy to pump it up. And there’s loads of it down there, no matter the season. Because of this, humans use a lot of it for drinking water, agriculture, and every other use you can think of.

Unfortunately, in many places, the rate of groundwater use has grown to exceed the rate at which precipitation soaks into the ground to replenish it.

Autonomous agents mark a new inflection point in AI. Systems are no longer limited to generating responses or reasoning through tasks. They can take action: Agents can read files, use tools, write and run code, and execute workflows across enterprise systems, all while expanding their own capabilities.

Application-layer risk grows exponentially when agents continuously improve and evolve. The NVIDIA OpenShell runtime is being built to address this.

Part of NVIDIA Agent Toolkit, OpenShell is an open source, secure-by-design runtime for running autonomous agents such as claws. It works by ensuring each agent runs inside its own sandbox, separating application-layer operations from infrastructure-layer policy enforcement.

This means security policies are out of reach of the agent — they’re applied at the system level. Instead of relying on behavioral prompts, OpenShell enforces constraints on the environment the agent runs in — meaning the agent cannot override policies, or leak credentials or private data, even if compromised.

With OpenShell, enterprises can separate agent behavior, policy definition and policy enforcement. Organizations gain a single, unified policy layer to define and monitor how autonomous systems operate. Coding agents, research assistants and agentic workflows all run under the same runtime policies regardless of host operating system, simplifying compliance and operational oversight.

This is the “browser tab” model applied to agents: Sessions are isolated, resources are controlled and permissions are verified by the runtime before any action takes place.
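As a rough illustration of that model, the following Python sketch places the permission check in the runtime rather than in the agent. It does not use or represent the OpenShell API: `Policy`, `Sandbox`, `PolicyViolation` and the tool names are hypothetical, and a real runtime would enforce constraints at the operating-system level rather than with a string prefix check.

```python
from dataclasses import dataclass


class PolicyViolation(Exception):
    """Raised by the runtime when an action falls outside the policy."""


@dataclass(frozen=True)
class Policy:
    # Immutable, so code holding a reference cannot mutate it in place.
    allowed_tools: frozenset
    readable_prefixes: tuple


class Sandbox:
    """Hypothetical runtime: every action is checked before it executes."""

    def __init__(self, policy: Policy):
        self._policy = policy
        self.audit_log = []     # (tool, path, allowed) tuples for oversight

    def run(self, tool: str, path: str) -> str:
        allowed = (
            tool in self._policy.allowed_tools
            and path.startswith(self._policy.readable_prefixes)
        )
        self.audit_log.append((tool, path, allowed))
        if not allowed:
            raise PolicyViolation(f"{tool} on {path} denied")
        # A real runtime would perform the action here; this only reports it.
        return f"{tool} ok: {path}"


sandbox = Sandbox(Policy(frozenset({"read_file"}), ("/workspace/",)))
print(sandbox.run("read_file", "/workspace/notes.txt"))
try:
    sandbox.run("read_file", "/etc/passwd")
except PolicyViolation as err:
    print("blocked:", err)
```

The design choice the sketch highlights is that the agent only ever calls `run`; the policy object and the decision logic live on the runtime side, so a change in the agent's prompts or behavior does not change what is permitted.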

Securing autonomous systems requires an integrated ecosystem. OpenShell is designed to add privacy and security controls for AI agents. NVIDIA is collaborating with security partners, including Cisco, CrowdStrike, Google Cloud, Microsoft Security and TrendAI, to align runtime policy management and enforcement for agents across the enterprise stack.

OpenShell Provides an Enterprise-Grade Sandbox for Building Personal AI Assistants

NVIDIA NemoClaw is an open source reference stack that simplifies installing OpenClaw always-on assistants with the OpenShell runtime and NVIDIA Nemotron models in a single command.

NemoClaw provides enthusiasts with an open reference for building self-evolving personal AI agents, or claws. Since security needs vary, NemoClaw provides a reference example for policy-based privacy and security guardrails to give users more control over their agents’ behavior and data-handling. Users can customize it for their specific use cases — much like adjusting security preferences for applications on a phone.

NemoClaw includes an example configuration of OpenShell that defines how the agent should interact with systems. NemoClaw uses open source models like NVIDIA Nemotron alongside OpenShell.

This enables self-evolving claws to run more securely in clouds, on premises or on personal computers, including NVIDIA GeForce RTX PCs and laptops or NVIDIA RTX PRO-powered workstations, as well as NVIDIA DGX Station and NVIDIA DGX Spark AI supercomputers.

Both OpenShell and NemoClaw are in early preview. NVIDIA is building in the open with the community and its partners to enable enterprises to scale self-evolving, long-running autonomous agents safely, confidently and in compliance with global security standards.

Get started with NVIDIA OpenShell and launch a ready‑to‑use environment on NVIDIA Brev, or explore the open source project on GitHub.

The Download: animal welfare gets AGI-pilled, and the White House unveils its AI policy

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

The Bay Area’s animal welfare movement wants to recruit AI

In early February, animal welfare advocates and AI researchers arrived in stocking feet at Mox, a scrappy, shoes-free coworking space in San Francisco. They gathered to discuss a provocative idea: if artificial general intelligence is on the horizon, could it prevent animal suffering?

Some brainstormed using custom agents in advocacy work, while others pitched cultivating meat with AI tools. But the real talk of the event was a flood of funding they expect will soon flow to animal welfare charities, not from individual megadonors, but from AI lab employees.

Some attendees also probed an even more controversial idea: AI may develop the capacity to suffer—and this could constitute a moral catastrophe. Read the full story to find out why their ideas are gaining momentum and sparking controversy.

—Michelle Kim & Grace Huckins

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 The White House has unveiled its AI policy blueprint

Trump wants Congress to codify the light-touch framework into law. (Politico)

+ He also wants to block state limits on AI. (WP $)

+ A backlash against the tech has formed within MAGA. (FT $)

+ A war over AI regulation is brewing in the US. (MIT Technology Review)

2 Elon Musk has been found liable for misleading Twitter investors

A jury ruled that he defrauded shareholders ahead of the $44 billion acquisition. (CNBC)

+ But it absolved him of some fraud allegations. (NPR)

3 The Pentagon is adopting Palantir AI as the core US military system

The move locks in long-term use of Palantir’s weapons-targeting tech. (Reuters)

+ The DoD wants it to link up sensors and shooters for combat. (Bloomberg)

+ Palantir is also getting access to sensitive UK financial regulation data. (Guardian)

+ AI is turning the Iran conflict into theater. (MIT Technology Review)

4 Musk plans to build the largest-ever chip factory in Austin

Tesla and SpaceX will jointly run the project. (The Verge)

+ Future AI chips could be built on glass. (MIT Technology Review)

5 OpenAI will show ads to all US users of the free version of ChatGPT

It’s seeking new revenue streams amid skyrocketing computing costs. (Reuters)

+ The company is also building a fully automated researcher. (MIT Technology Review)

+ It plans to double its workforce soon. (FT $)

6 New crypto rules are set to do the Trumps a “big favor”

Particularly the narrow securities definitions. (Guardian)

7 Tencent has added a version of the OpenClaw agent to WeChat

Users of the super app will now be able to use the tool to control their PCs. (SCMP)

8 Reddit is mulling identity verification to vanquish bots

It’s considering “something like” Face ID or Touch ID. (Engadget)

9 People are using AI to find their lost pets

Databases for pet reunifications supported their searches. (WP $)

10 Scientists have narrowed down the hunt for aliens to 45 planets

The closest is just four light-years from Earth. (404 Media)

Quote of the day

“It doesn’t matter how many people you throw at the problem; we are never going to solve the challenges of war without technology like AI.”

—Alex Miller, the US Army’s CTO, tells Wired why he wants AI in every weapon.

One More Thing

A brain implant changed her life. Then it was removed against her will.

Sticking an electrode inside a person’s brain can do more than treat a disease. Take the case of Rita Leggett, an Australian woman whose experimental brain implant changed her sense of agency and self. She told researchers that she “became one” with her device.

She was devastated when, two years later, she was told she had to remove the implant because the company that made it had gone bust.

Her case highlights the need for a new category of legal protection: neuro rights. Find out how they could be protected.

—Jessica Hamzelou

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line.)

+ Looking for a good view? Earth’s longest line of sight has been empirically proven.

+ A biblical endorsement of sin is a welcome reminder that we all make typos.

+ Richard Nadler’s illustrations of vertical societies are exquisitely detailed.

+ This 1978 BBC film evocatively exposes our tendency to stress over tech-dependency.

BROOMFIELD, Colorado—One of NASA's oldest astronomy missions, the Neil Gehrels Swift Observatory, has been out of action for more than a month as scientists await the arrival of a pioneering robotic rescue mission.

The 21-year-old spacecraft is falling out of orbit, and NASA officials believe it's worth saving—for the right price. Swift is not a flagship astronomy mission like Hubble or Webb, so there's no talk of sending astronauts or spending hundreds of millions of dollars on a rescue expedition. Hubble was upgraded by five space shuttle missions, and billionaire and commercial astronaut Jared Isaacman—now NASA's administrator—proposed a privately funded mission to service Hubble in 2022, but the agency rejected the idea.

Swift may be a more suitable target for a first-of-a-kind commercial rescue mission. It has cost roughly $500 million (adjusted for inflation) to build, launch, and operate, but it is significantly less expensive than Hubble, so the consequences of a botched rescue would be far less severe. Last September, NASA awarded a company named Katalyst Space Technologies a $30 million contract to rapidly build and launch a commercial satellite to stabilize Swift's orbit and extend its mission.

A "phone" company is now competing with Anthropic on AI benchmarks. Xiaomi's MiMo-V2-Pro ranks #3 globally on agent tasks.

Xiaomi, yes the "phone" company, has two AI models that are turning heads. Pro (1T params) ranks right behind Claude Opus 4.6 on agent benchmarks at 1/8th the price. Flash (309B, open source) beats every other open source model on SWE-Bench at $0.10 per million tokens.

The lead researcher came from DeepSeek. The Pro model spent a week on OpenRouter under the codename "Hunter Alpha" with no attribution. Developers tested it, praised it, and the entire community assumed it was DeepSeek V4. Then Xiaomi revealed it was theirs.

Some numbers that put this in perspective:

- MiMo-V2-Pro: 1T total params, 42B active, 1M context window, $1/$3 per million tokens

- MiMo-V2-Flash: 309B total, 15B active, 150 tok/s, $0.10/$0.30, fully open source on HuggingFace

- Claude Opus 4.6: $5/$25 per million tokens for comparable agent performance

- Flash scores 73.4% on SWE-Bench. Claude Sonnet scores 72.8% at 30x the price.
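To make the price gap concrete, here is a rough sketch that plugs the per-million-token prices listed above into a simple cost function. The workload size (2M input tokens, 500K output tokens) is a made-up assumption for illustration, and `cost_usd` is just a helper name, not anything from Xiaomi or Anthropic.

```python
# Prices in USD per million tokens as (input, output), taken from the list above.
PRICES = {
    "MiMo-V2-Pro": (1.00, 3.00),
    "MiMo-V2-Flash": (0.10, 0.30),
    "Claude Opus 4.6": (5.00, 25.00),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the cost in USD of one workload at the listed per-million rates."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload (an assumption, not from the post):
    # 2M input tokens and 500K output tokens.
    for model in PRICES:
        print(f"{model}: ${cost_usd(model, 2_000_000, 500_000):.2f}")
```

With this particular mix, Opus works out to roughly 6.4x the cost of Pro. The ratio depends on the input/output split: it approaches 5x for input-heavy jobs and about 8.3x for output-heavy ones, which is where the "1/8th the price" figure roughly lands.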

They also released MiMo-V2-Omni (multimodal, processes text/image/video/10+ hours of audio) and MiMo-V2-TTS (expressive speech). The full family is designed as an integrated agent stack: Pro thinks, Omni perceives, TTS speaks.

A year ago Xiaomi was known for phones and rice cookers. Now they have a four-model AI family that competes with frontier labs. The Chinese AI race is getting wild.

Full comparison of Pro vs Flash: https://www.aimadetools.com/blog/mimo-v2-pro-vs-mimo-v2-flash/

In early February, animal welfare advocates and AI researchers gathered in stocking feet at Mox, a scrappy, shoes-free coworking space in San Francisco. Yellow and red canopies billowed overhead, Persian rugs blanketed the floor, and mosaic lamps glowed beside potted plants.

In the common area, a wildlife advocate spoke passionately to a crowd lounging in beanbags about a form of rodent birth control that could manage rat populations without poison. In the “Crustacean Room,” a dozen people sat in a circle, debating whether the sentience of insects could tell us anything about the inner lives of chatbots. In front of the “Bovine Room” stood a bookshelf stacked with copies of Eliezer Yudkowsky’s If Anyone Builds It, Everyone Dies, a manifesto arguing that AI could wipe out humanity.

The event was hosted by Sentient Futures, an organization that believes the future of animal welfare will depend on AI. Like many Bay Area denizens, the attendees were decidedly “AGI-pilled”—they believe that artificial general intelligence, powerful AI that can compete with humans on most cognitive tasks, is on the horizon. If that’s true, they reason, then AI will likely prove key to solving society’s thorniest problems—including animal suffering.

To be clear, experts still fiercely debate whether today’s AI systems will ever achieve human- or superhuman-level intelligence, and it’s not clear what will happen if they do. But some conference attendees envision a possible future in which it is AI systems, and not humans, who call the shots. Eventually, they think, the welfare of animals could hinge on whether we’ve trained AI systems to value animal lives.

“AI is going to be very transformative, and it’s going to pretty much flip the game board,” said Constance Li, founder of Sentient Futures. “If you think that AI will make the majority of decisions, then it matters how they value animals and other sentient beings”—those that can feel and, therefore, suffer.

Like Li, many summit attendees have been committed to animal welfare since long before AI came into the picture. But they’re not the types to donate a hundred bucks to an animal shelter. Instead of focusing on local actions, they prioritize larger-scale solutions, such as reducing factory farming by promoting cultivated meat, which is grown in a lab from animal cells.

The Bay Area animal welfare movement is closely linked to effective altruism, a philanthropic movement committed to maximizing the amount of good one does in the world—indeed, many conference attendees work for organizations funded by effective altruists. That philosophy might sound great on paper, but “maximizing good” is a tricky puzzle that might not admit a clear solution. The movement has been widely criticized for some of its conclusions, such as promoting working in exploitative industries to maximize charitable donations and ignoring present-day harms in favor of issues that could cause suffering for a large number of people who haven’t been born yet. Critics also argue that effective altruists neglect the importance of systemic issues such as racism and economic exploitation and overlook the insights that marginalized communities might have into the best ways to improve their own lives.

When it comes to animal welfare, this exactingly utilitarian approach can lead to some strange conclusions. For example, some effective altruists say it makes sense to commit significant resources to improving the welfare of insects and shrimp because they exist in such staggering numbers, even though they may not have much individual capacity for suffering.

Now the movement is sorting out how AI fits in. At the summit, Jasmine Brazilek, cofounder of a nonprofit called Compassion in Machine Learning, opened her sticker-stamped laptop to pull up a benchmark she devised to measure how LLMs reason about animal welfare. A cloud security engineer turned animal advocate, she’d flown in from La Paz, Mexico, where she runs her nonprofit with a handful of volunteers and a shoestring budget.

Brazilek urged the AI researchers in the room to train their models with synthetic documents that reflect concern for animal welfare. “Hopefully, future superintelligent systems consider nonhuman interest, and there is a world where AI amplifies the best of human values and not the worst,” she said.

The power of the purse

The technologically inclined side of the animal welfare movement has faced some major setbacks in recent years. Dreams of transitioning people away from a diet dependent on factory farming have been dampened by developments such as the decimation of the plant-based-meat company Beyond Meat’s stock price and the passage of laws banning cultivated meat in several US states.

AI has injected a shot of optimism. Like much of Silicon Valley, many attendees at the summit subscribe to the idea that AI might dramatically increase their productivity—though their goal is not to maximize their seed round but, rather, to prevent as much animal suffering as possible. Some brainstormed how to use Claude Code and custom agents to handle the coding and administrative tasks in their advocacy work. Others pitched the idea of developing new, cheaper methods for cultivating meat using scientific AI tools such as AlphaFold, which aids in molecular biology research by predicting the three-dimensional structures of proteins.

But the real talk of the event was a flood of funding that advocates expect will soon be committed to animal welfare charities—not by individual megadonors, but by AI lab employees.

Much of the funding for the farm animal welfare movement, which includes nonprofits advocating for improved conditions on farms, promoting veganism, and endorsing cultivated meat, comes from people in the tech industry, says Lewis Bollard, the managing director of the farm animal welfare fund at Coefficient Giving, a philanthropic funder that used to be called Open Philanthropy. Coefficient Giving is backed by Facebook cofounder Dustin Moskovitz and his wife, Cari Tuna, who are among a handful of Silicon Valley billionaires who embrace effective altruism.

“This has just been an area that was completely neglected by traditional philanthropies,” such as the Gates Foundation and the Ford Foundation, Bollard says. “It’s primarily been people in tech who have been open to [it].”

The next generation of big donors, Bollard expects, will be AI researchers—particularly those who work at Anthropic, the AI lab behind the chatbot Claude. Anthropic’s founding team also has connections to the effective altruism movement, and the company has a generous donation matching program. In February, Anthropic’s valuation reached $380 billion and it gave employees the option to cash in on their equity, so some of that money could soon be flowing into charitable coffers.

The prospect of new funding sustained a constant buzz of conversation at the summit. Animal welfare advocates huddled in the “Arthropod Room” and scrawled big dollar figures and catchy acronyms for projects on a whiteboard. One person pitched a $100 million animal super PAC that would place staffers with Congress members and lobby for animal welfare legislation. Some wanted to start a media company that creates AI-generated content on TikTok promoting veganism. Others spoke about placing animal advocates inside AI labs.

“The amount of new funding does give us more confidence to be bolder about things,” said Aaron Boddy, cofounder of the Shrimp Welfare Project, an organization that aims to reduce the suffering of farmed shrimp through humane slaughter, among other initiatives.

The question of AI welfare

But animal welfare was only half the focus of the Sentient Futures summit. Some attendees probed far headier territory. They took seriously the controversial idea that AI systems might one day develop the capacity to feel and therefore suffer, and they worry that this future AI suffering, if ignored, could constitute a moral catastrophe.

AI suffering is a tricky research problem, not least because scientists don’t yet have a solid grip on why humans and other animals are sentient. But at the summit, a niche cadre of philosophers, largely funded by the effective altruism movement, and a handful of freewheeling academics grappled with the question. Some presented their research on using LLMs to evaluate whether other LLMs might be sentient. On Debate Night, attendees argued about whether we should ironically call sentient AI systems “clankers,” a derogatory term for robots from the film Star Wars, asking if the robot slur could shape how we treat a new kind of mind.

“It doesn’t matter if it’s a cow or a pig or an AI, as long as they have the capacity to feel happiness or suffering,” says Li.

In some ways, bringing AI sentience into an animal welfare conference isn’t as strange a move as it might seem. Researchers who work on machine sentience often draw on theories and approaches pioneered in the study of animal sentience, and if you accept that invertebrates likely feel pain and believe that AI systems might soon achieve superhuman intelligence, entertaining the possibility that those systems might also suffer may not be much of a leap.

“Animal welfare advocates are used to going against the grain,” says Derek Shiller, an AI consciousness researcher at the think tank Rethink Priorities, who was once a web developer at the animal advocacy nonprofit Humane League. “They’re more open to being concerned about AI welfare, even though other people think it’s silly.”

But outside the niche Bay Area circle, caring about the possibility of AI sentience is a harder sell. Li says she faced pushback from other animal welfare advocates when, inspired by a conference on AI sentience she attended in 2023, she rebranded her farm animal welfare advocacy organization as Sentient Futures last year. “Many people were extremely confident that AIs would never become sentient and [argued that] by investing any energy or money into AI welfare, we’re just burning money and throwing it away,” she says.

Matt Dominguez, executive director of Compassion in World Farming, echoed the concern. “I would hate to see people pulling money out of farm animal welfare or animal welfare and moving it into something that is hypothetical at this particular moment,” he says.

Still, Dominguez, who started partnering with the Shrimp Welfare Project after learning about invertebrate suffering, believes compassion is expansive. “When we get someone to care about one of those things, it creates capacity for their circle of compassion to grow to include others,” he says.

A new bill proposed in California "goes after big tech companies," writes Semafor. Supported by Y Combinator, Cory Doctorow, and the nonprofit advocacy group Fight for the Future, it's called the "BASED" act - an acronym which stands for "Blocking Anticompetitive Self-preferencing by Entrenched Dominant platforms."

As announced by California state senator Scott Wiener of San Francisco, the bill "will restore competition to the digital marketplace by prohibiting any digital platform with a market capitalization greater than $1 trillion and serving 100 million or more monthly users in the U.S., from favoring their own products and services on the platforms they operate."

More from Scott Wiener's announcement:

For years, giant digital platforms like Apple, Amazon, Google, and Meta have used their immense power to promote their own products and services while stifling competitors - a practice also known as self-preferencing. The result has been higher prices, diminished service, and fewer options for consumers, and less innovation across the technology ecosystem. Self-preferencing also locks startups and mid-sized companies out of the online marketplace unless they play by rules set by their competitors. As a new generation of AI-powered startups seeks to enter the marketplace, their success - and public access to the innovations they produce - depends on their ability to compete on an even playing field.

"Anticompetitive behavior is everywhere on the internet," said Senator Wiener, "from rigged search results, to manipulative nudges boosting the 'house' product, to anti-discount policies that raise prices, to the dreaded green bubble that 'breaks' the group chat. When the world's largest digital platforms rig the game to favor their own products and services, we all lose. By prohibiting these anticompetitive practices, the BASED Act will protect competition online, empower consumers and startups, and promote innovations to improve all our lives."

The announcement includes a quote from Teri Olle, VP of the nonprofit Economic Security California Action, saying the act would "safeguard merit-based market competition. This legislation stands for a simple principle: owning the stadium doesn't mean that you get to rig the game." Some conduct prohibited by the proposed bill includes manipulating the order of search results to favor a provider's products or services, irrespective of a merit-based process; and using non-public data generated by third-party sellers - including sales volumes, pricing, and customer behavior - to develop competing products that are subsequently boosted above the third-party sellers' product...

And the announcement also notes that "under the terms of the bill, providers could not prevent consumers from obtaining a portable copy of their own data or restrict voluntary data sharing (by consumers) with third parties."

Read on for reactions from DuckDuckGo, Proton, Yelp, Y Combinator, and Cory Doctorow.

CNBC reports:

Amazon has acquired Rivr, a Swiss robotics company developing machines for "doorstep delivery," the company confirmed Thursday... It announced the deal in a notice sent to third-party delivery contractors... "We believe this technology, when working alongside your [delivery associates], has the potential to further improve safety outcomes and the overall customer experience, particularly in the last steps of the delivery process...." In its notice to delivery service partner owners, Amazon said Rivr's technology, which includes a four-legged robot on wheels, will allow it to research and test how the devices can be integrated into delivery operations, including "helping [delivery associates] carry packages from delivery vehicles to customer doorsteps."

EFF Tells Publishers: Blocking the Internet Archive Won't Stop AI, But It Will Erase The Historical Record

"Imagine a newspaper publisher announcing it will no longer allow libraries to keep copies of its paper," writes EFF senior policy analyst Joe Mullin.

"That's effectively what's begun happening online in the last few months."

The Internet Archive - the world's largest digital library - has preserved newspapers since it went online in the mid-1990s... But in recent months The New York Times began blocking the Archive from crawling its website, using technical measures that go beyond the web's traditional robots.txt rules. That risks cutting off a record that historians and journalists have relied on for decades. Other newspapers, including The Guardian, seem to be following suit...

The Times says the move is driven by concerns about AI companies scraping news content. Publishers seek control over how their work is used, and several - including the Times - are now suing AI companies over whether training models on copyrighted material violates the law. There's a strong case that such training is fair use. Whatever the outcome of those lawsuits, blocking nonprofit archivists is the wrong response.

Organizations like the Internet Archive are not building commercial AI systems. They are preserving a record of our history. Turning off that preservation in an effort to control AI access could essentially torch decades of historical documentation over a fight that libraries like the Archive didn't start, and didn't ask for. If publishers shut the Archive out, they aren't just limiting bots. They're erasing the historical record...

Even if courts place limits on AI training, the law protecting search and web archiving is already well established... There are real disputes over AI training that must be resolved in courts. But sacrificing the public record to fight those battles would be a profound, and possibly irreversible, mistake.
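For readers unfamiliar with the "traditional robots.txt rules" mentioned above, here is a minimal sketch of how a well-behaved crawler checks them, using Python's standard `urllib.robotparser`. The rules, the `ExampleArchiveBot` user agent, and the `example.com` URLs are all made up for illustration; they are not the actual rules or crawler names used by the Times or the Internet Archive.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: one named crawler is shut out entirely,
# everyone else is only kept out of /private/.
ROBOTS_TXT = """\
User-agent: ExampleArchiveBot
Disallow: /

User-agent: *
Disallow: /private/
"""


def allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if the given robots.txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    story = "https://example.com/2024/story.html"
    print(allowed("ExampleArchiveBot", story))  # the named bot is blocked
    print(allowed("OtherBot", story))           # other bots may fetch it
```

Note that robots.txt is purely advisory: it only works because crawlers choose to run a check like this. That is why blocking "beyond" robots.txt, as the EFF describes, is a different kind of measure.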

"Juicier steaks could soon be served up after barley was given the go-ahead to become Britain's first gene-edited crop," reports the Telegraph: In an effort to fatten up cows and get them to market faster, scientists have altered the DNA of Golden Promise barley to increase its fat content... [Regulators have approved the feeding of that barley to cows for further studies.] [T]he small increase reduces the time it takes for farmers to raise animals for slaughter and increases the amount of milk and meat they produce to make the industry more profitable.

The gene-edited barley is also able to cut the amount of methane a cow produces, [Rothamsted Research professor/biochemist Peter] Eastmond said... Reducing methane from cattle is a major goal of the industry, and Professor Eastmond estimated his barley could cut the methane output from a single cow by up to 15%.

The two genetic tweaks to the barley are believed to alter the gut bacteria in cows' stomachs and reduce the amount of methane-generating microbes, cutting the cows' emissions.... [Eastmond] is also working on applying the same two gene edits to rye grass to create pastures and meadows which are lipid-rich and calorie-dense. This, he said, could lead to entire fields of gene-edited grass which could be grazed by cows, sheep, horses and goats to fatten them up and cut emissions... "It would be better to have this technology in a pasture grass that's grown to supply the livestock and graze it directly." The barley "has been modified to have a single letter of DNA removed from two different genes to switch them off," the article points out. "No genes have been added to its DNA and it is not considered to be genetically modified."

The article points out that Britain "has launched a push towards more gene-edited crops as a key post-Brexit freedom since splitting from the European Union," noting that U.K. scientists and private companies "have created products such as bread with fewer cancer-causing chemicals, longer-lasting strawberries and bananas, sweeter-tasting lettuce and disease-resistant potatoes, although these are yet to be granted permission to land on supermarket shelves..." But the EU has so far resisted the sale of any gene-edited crops.

Thanks to long-time Slashdot reader fjo3 for sharing the article.

China's orbital outpost Tiangong was completed in 2022 and is hosting up to three astronauts at a time, reports CNN.

But meanwhile U.S. lawmakers are now signaling there's not enough time to develop and launch a replacement for the International Space Station - considered the single most expensive object ever built - before its deorbiting in 2030. A recent Senate bill calls for the U.S. to continue funding it as late as 2032, but that bill still awaits approval from the U.S. Senate and the House.

But some private space companies are already building their alternatives:

Private companies that are in the early design and mockup phase of developing these space stations are still waiting on NASA for guidance - and money... [NASA's "Requests for Proposals"] were delayed, in part because it took all of 2025 to cinch a confirmation for Trump's on-again-off-again pick for NASA administrator, Jared Isaacman [confirmed in December]... Similarly, 2025 saw a 45-day government shutdown, the longest in history - adding another hiccup in the space agency's plans to begin formally soliciting proposals from the private sector. Companies now expect that NASA will issue its Request for Proposals in late March or early April, one CEO told CNN...

Several commercial outfits have recently announced big funding influxes aimed at speeding up the development and launch of new orbiting outposts. Houston-based Axiom Space announced a $350 million funding round last month. Its California-based competitor Vast then notched a $500 million raise in early March. Vast is determined to launch a bare-bones station to orbit as soon as possible, with or without federal input, according to the company. "Our approach is to actually not wait for (NASA) and get going and build a minimum viable product, single-module space station called Haven-1, which we're launching into orbit next year," Vast CEO Max Haot told CNN in a phone interview earlier this month. Similarly, Axiom Space is working toward a 2028 launch date for a module that it plans to initially attach to the ISS before breaking off to orbit on its own. A spokesperson told CNN that the company is "committed" to winning the NASA contract money and may continue pursuing such goals even without contract awards.

Still, there's lingering doubt that any of the companies pursuing space stations will be able to stay afloat without securing a coveted NASA contract or at least cinching significant business from the public sector.

The article includes "Another complicating fact: Russia, the United States' primary partner on the ISS, has not pledged to keep operating its half of the space station past 2028." NASA will eventually evaluate proposals for an ISS alternative from Vast, Axiom Space, Jeff Bezos' Blue Origin, Max Space and several competitors including Voyager Technologies, CNN notes, ultimately handing out an estimated $1.5 billion in contracts between 2026 and 2031.

And while those companies may wait decades before a return on their investment, the article includes this quote from the cofounder/general partner of Balerion Space Ventures, which led the fundraising for Vast. "What's obvious to us is you're going to have multiple vehicles with myriad companies go into space. You're going to have vehicles leaving from celestial bodies, like the moon. And we need a habitat."

ReactOS aims to be compatible with programs and drivers developed for Windows Server 2003 and later versions of Microsoft Windows.

And Slashdot reader jeditobe reports that the project has now "announced significant progress in achieving compatibility with proprietary graphics drivers."

ReactOS now supports roughly 90% of GPU drivers for Windows XP and Windows Server 2003, thanks to a series of fixes and the implementation of the KMDF (Kernel-Mode Driver Framework) and WDDM (Windows Display Driver Model) subsystems. Prior to these changes, many proprietary drivers either failed to launch or exhibited unstable behavior. In the latest nightly builds of the 0.4.16 branch, drivers from a variety of manufacturers - including Intel, NVIDIA, and AMD - are running reliably.

The project demonstrated ReactOS running on real hardware, including booting with installed drivers for graphics cards such as Intel GMA 945, NVIDIA GeForce 8800 GTS and GTX 750 Ti, and AMD Radeon HD 7530G. They also highlighted successful operation on mobile GPUs like the NVIDIA Quadro 1000M, with 2D/3D acceleration, audio, and network connectivity all functioning correctly. Further tests confirmed support on less common or older configurations, including a laptop with a Radeon Xpress 1100, as well as high-performance cards like the NVIDIA GTX Titan X.

A key contribution came from a patch merged into the main branch for the memory management subsystem, which improved driver stability and reduced crashes during graphics adapter initialization.

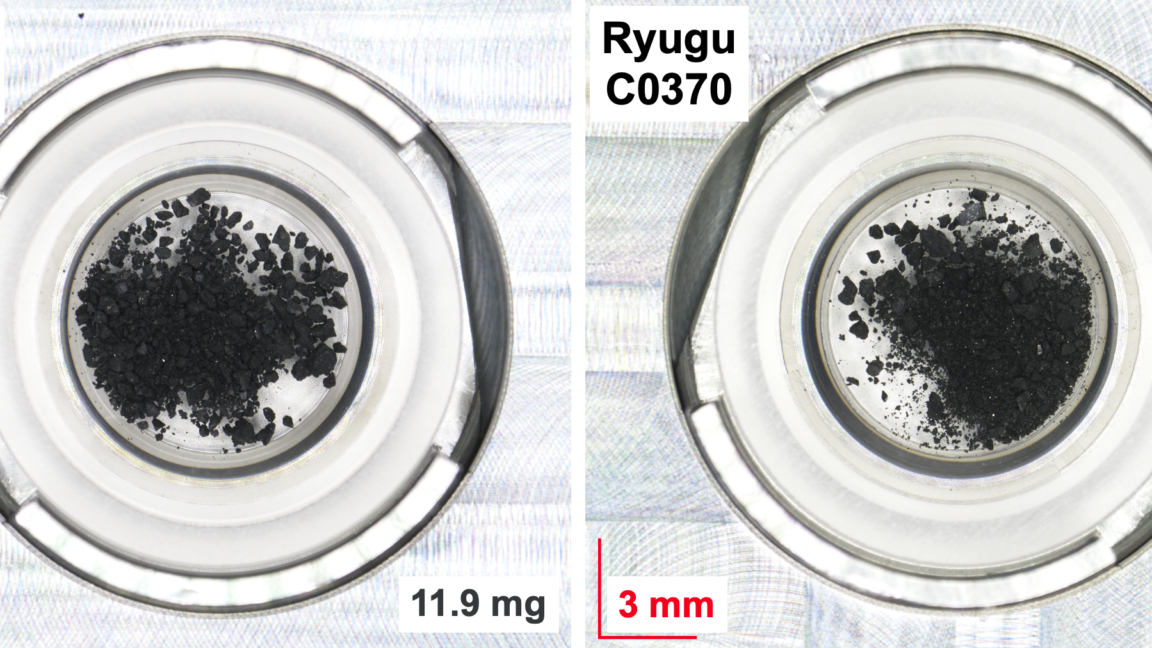

On Monday, a paper announcing that all four DNA bases had been found on an asteroid sparked a lot of headlines. But many of the headlines omitted a key word needed to put the discovery in context: "again." The paper itself cited similar results dating back to 2011, and the ensuing years have seen various confirmations and more rigorous studies. The new work was less notable for showing that we had found these bases in Ryugu than for solving a previous mystery: earlier studies had failed to detect them there, despite their presence in many other asteroid samples.

Outside the headlines, though, the new work provides some interesting details, as it may answer an important question: how these bases got there to begin with. Understanding that better may be critical for getting a clearer picture of how the raw materials for life ended up on Earth in the first place.

Searching for bases

Let's start with a description of what the researchers found. Both DNA and RNA, the two nucleic acids used by life, share a similar structure. That includes the backbone, a chain that alternates between sugars and phosphates that are all chemically linked together. While the specific sugar differs between DNA and RNA, the chain itself varies only in length; otherwise, the backbone of every DNA or RNA molecule is identical.

Jensen talking about the next wave of AI. Imagine an even faster rate of advancement in the field of biology compared to what we've seen with programming over the past few years.

An anonymous reader quotes a report from CNBC: The Trump administration on Friday issued (PDF) a legislative framework for a single national policy on artificial intelligence, aiming to create uniform safety and security guardrails around the nascent technology while preempting states from enacting their own AI rules. The six-pronged outline broadly proposes a slew of regulations on AI products and infrastructure, ranging from implementing new child-safety rules to standardizing the permitting and energy use of AI data centers. It also calls on Congress to address thorny issues surrounding intellectual-property rights and craft rules "preventing AI systems from being used to silence or censor lawful political expression or dissent."

The administration said in an official release that it wants to work with Congress "in the coming months" to convert its framework into a bill that President Donald Trump can sign. The White House wants to codify the framework into law "this year" and believes it can generate bipartisan support, Michael Kratsios, director of the White House Office of Science and Technology Policy, said in an interview with Fox News on Thursday evening. That won't be easy in a deeply divided Congress where Republicans hold thin and often fractious majorities, and where Trump has already urged GOP lawmakers to prioritize his controversial voter-ID bill above all else ahead of the November midterms. BCLP has an interactive map that tracks the proposed, failed and enacted AI regulatory bills from each state.

[BREAKTHROUGH] Memory Sparse Attention (MSA) allows 100M context window with minimal performance loss

Remember to click on translate if you don't know Chinese. X post

Here is a YouTube video from MattVidPro explaining it in detail with a nice Notebook LM breakdown.

And here is the GitHub paper.

Caveat: It scales memory really well, but not deep reasoning—great at finding info, less reliable at fully connecting complex ideas spread across many sources.

What does it mean for us users?

Today:

- hard context limits → resets

Future:

- no reset, but occasional blind spots

That’s the tradeoff.

As energy prices soar from the Iran conflict, the International Energy Agency is urging governments to cut energy use by taking up measures like remote work and reduced speed limits. The group warns the energy security crisis could persist for months, even if supply routes stabilize. "I believe the world has not yet well understood the depth of the energy security challenge we are facing," said IEA's executive director, Fatih Birol. "It is much bigger than what we had in the 1970s... It is also bigger than the natural gas price shock we experienced after Russia's invasion of Ukraine." The BBC reports: Thirty-two countries are members of the IEA, including the US, the UK, Australia, Canada, Japan and 24 other European nations. Its role is to act as a global watchdog, providing analysis and recommendations on global energy problems, such as energy security and the transition to clean energy. The IEA's other suggestions for governments, businesses and individuals include:

- Promoting use of public transport

- Giving private cars access to city centres on alternate days

- Encouraging car sharing and efficient driving habits

- Avoiding air travel where possible, especially business flights

- Switching to electric cooking

It also said there should be a focused effort to preserve liquid petroleum gas for cooking and other essential uses, by switching bio-fuel converted vehicles onto gas and introducing other measures to reduce its use. Birol said these proposals were in addition to action taken by IEA member countries earlier this month, when they agreed to release 400 million barrels of oil, 20% of their emergency reserves. Several countries in Asia have implemented emergency four-day workweeks and work-from-home mandates as they have been hit particularly hard by the conflict. Fortune notes: "Asia is particularly dependent on oil exports from the Middle East; Japan and South Korea respectively source 90% and 70% of their oil from the region."

joshuark shares a report from Neowin: OpenAI is planning to combine its Atlas web browser, ChatGPT app, and Codex coding app into a single desktop "superapp." CEO of Applications, Fidji Simo, said the company was doubling down on its successful products. With this move, the AI company aims to streamline the user experience and reduce fragmentation. Simo said in an internal memo: "We realized we were spreading our efforts across too many apps and stacks, and that we need to simplify our efforts. That fragmentation has been slowing us down and making it harder to hit the quality bar we want."

An anonymous reader quotes a report from the Portland Tribune: There was plenty of uncertainty and debate about the effectiveness of a cell phone ban decreed (PDF) by executive order last summer. But at least in Estacada, the policy has earned two thumbs up, including approval from a "grumpy old teacher." Jeff Mellema is a language arts teacher at Estacada High School. He has worked in the building for 24 years, and he said the new policy that prohibits students from using their phones during the day has been a breath of fresh air.

"There is so much better discourse in my classroom, be it personal or academic," Mellema said. "Students can't avoid those conversations anymore with their phones." "This ban has brought joy back to this old, grumpy teacher," he added with a smile. That is the kind of feedback Gov. Tina Kotek was hoping for as she visited Estacada High School on Wednesday afternoon, March 18. Her goal was to visit classrooms, speak with administrators, and meet with students one-on-one to hear about the effectiveness of her phone policy. [...] In the classrooms, she was able to take a straw poll around the cell phone ban and then get specific, direct feedback from the kids. Overall, it was positive.

The Rangers said they noticed changes in how they interact with teachers and peers. They don't feel that "siren's song" tug of their phones as often, and the changes are bleeding into everyday life as well -- think less reminders to put phones away during family dinners. Phones also led to issues around bullying and online toxicity during the school day. There are some hiccups. The students spoke about difficulties in tracking busy schedules. Many athletes relied on their phones for practice times and locations. Some advanced placement kids said the overzealous programs monitoring school laptops blocked access to needed resources for studying/researching schoolwork. There is even a strange quirk with school-provided tech that prevents them from accessing their calculators. "Maybe the filters are too strong right now," Gov. Kotek said. "That is why we are working with the districts to best implement the policy."

The kids also weighed in on the debate around the extent of the ban. The two options bandied in Salem were a "bell-to-bell" policy or just inside classrooms. The latter would allow kids to use their phones during passing period and lunch. Several advocated for that change. That mirrored the debate within the Oregon legislature. It ultimately led to a stalemate and the need for Gov. Kotek's executive ruling. "When you make a decision like this, you don't know how it will ultimately work," Kotek told the students. "I appreciate you adapting to the situation and making it work for you." While things could change in the future, the governor is pleased with the early results. The phone ban is here to stay.

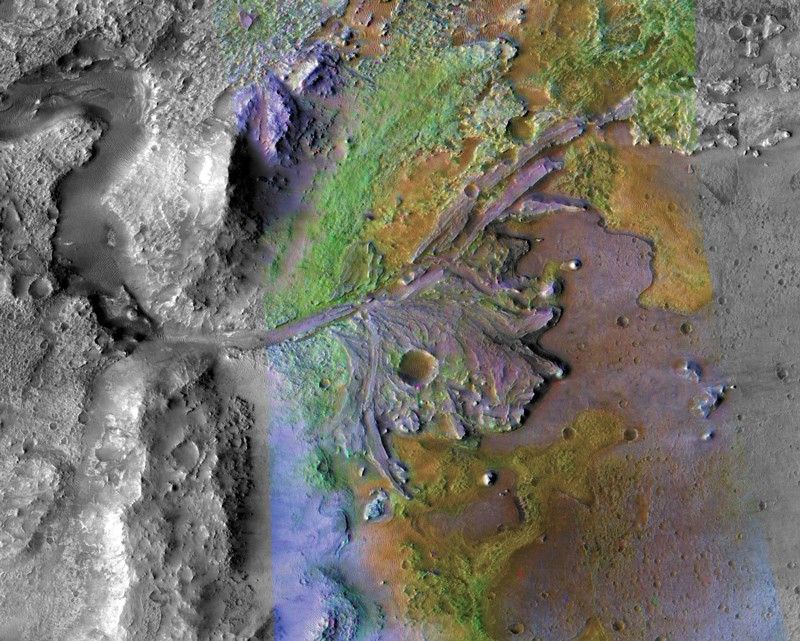

When NASA’s Perseverance rover landed in Jezero Crater in 2021, its primary mission was to scour the remnants of a dried-up Martian lakebed for signs of ancient life. Scientists have been focused on the crater's spectacular Western Delta, a fan-shaped geologic feature deposited by a river flowing into the basin billions of years ago. But now Perseverance’s ground-penetrating radar (called RIMFAX) has detected what is likely another, even older river delta buried tens of meters beneath it.

“I think it’s a promising place to look for signs of biosignatures at depth,” says Emily L. Cardarelli. “Microbial life could have potentially developed in those types of environments.” Cardarelli, an astrobiologist at the University of California Los Angeles, led the team interpreting RIMFAX imagery.

Peeking underground

Perseverance’s RIMFAX, the Radar Imager for Mars Subsurface Experiment, continuously fires radar waves into the ground, acquiring soundings each time the rover travels 10 centimeters. When these radio waves hit boundaries between different types of rock, ice, or sediment layers, some of the signal bounces back. The timing and intensity of these reflections allow scientists to construct a two-dimensional, vertical slice of the subsurface, much like a sonogram of the Martian crust.
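The step from echo timing to depth can be sketched numerically. This is a minimal illustration of the basic relation only, not the RIMFAX processing pipeline: it assumes a single uniform layer, and the function name, the permittivity value, and the echo delay are all hypothetical values chosen for the example.

```python
import math

C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum


def reflection_depth_m(two_way_time_ns: float, relative_permittivity: float) -> float:
    """Depth of a reflector from the round-trip delay of a radar echo.

    Assumes one uniform, non-magnetic layer, so the wave speed is
    c / sqrt(relative_permittivity). Real subsurface data needs a
    layered velocity model; this is only the basic relation.
    """
    if two_way_time_ns < 0 or relative_permittivity < 1:
        raise ValueError("time must be >= 0 and permittivity >= 1")
    velocity = C_VACUUM_M_PER_S / math.sqrt(relative_permittivity)
    # The echo travels down and back, so halve the measured delay.
    one_way_time_s = (two_way_time_ns * 1e-9) / 2.0
    return velocity * one_way_time_s


# Hypothetical numbers: a 400 ns echo in material with permittivity 9
# (an assumed value, not a measurement from the rover).
depth = reflection_depth_m(400.0, 9.0)
print(f"{depth:.1f} m")
```

Repeating this for every sounding along the rover's path, and stacking the results side by side, is what produces the two-dimensional vertical slice the article describes.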

For more than 60 years, nearly every large rocket used some combination of the same liquid and solid propellants. Refined kerosene was favored for its easy handling and non-toxicity, hydrazine for its storability and simplicity, hydrogen for its efficiency, and solid fuels for their long shelf life and rapid launch capability.

About 15 years ago, rocket companies started serious development of large methane-fueled engines. SpaceX and Blue Origin now build the most powerful of these new engines—the Raptor and BE-4—each capable of generating more than half a million pounds of thrust. SpaceX's Starship rocket and its enormous booster are powered by 39 Raptors, while Blue Origin's New Glenn and United Launch Alliance's Vulcan rockets use a smaller number of BE-4s on their booster stages.

Burning methane in combination with liquid oxygen, these "methalox" engines have several advantages. Methane is better suited for reusable engines because it leaves behind less sooty residue than kerosene, which SpaceX uses on the Falcon 9 rocket. Methane is easier to handle than liquid hydrogen, which is prone to leaks and must be stored at staggeringly cold temperatures of around minus 423 degrees Fahrenheit (minus 253 degrees Celsius). Methane is also a cryogenic liquid, but it is stored at a warmer temperature closer to that of liquid oxygen, between minus 260 and minus 297 degrees Fahrenheit (minus 162 to minus 183 degrees Celsius).
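The paired temperatures in the paragraph above can be checked with the standard Fahrenheit-to-Celsius conversion. The sketch below is only a sanity check. The helper name is chosen for illustration, and assigning minus 260 to methane and minus 297 to liquid oxygen is a reading of the quoted range, which the article gives without labels.

```python
def fahrenheit_to_celsius(temp_f: float) -> float:
    """Standard conversion: C = (F - 32) * 5 / 9."""
    return (temp_f - 32.0) * 5.0 / 9.0


# Temperatures quoted in the text, in degrees Fahrenheit.
# The methane/oxygen labels are an assumed reading of the quoted range.
quoted_f = {
    "liquid hydrogen": -423.0,
    "liquid methane": -260.0,
    "liquid oxygen": -297.0,
}

# Round to whole degrees, matching the precision used in the article.
converted_c = {name: round(fahrenheit_to_celsius(f)) for name, f in quoted_f.items()}
for name, c in converted_c.items():
    print(f"{name}: {c} C")
```

The rounded results match the Celsius figures given in parentheses, and show methane sitting about 20 degrees Celsius above liquid oxygen but roughly 90 degrees above liquid hydrogen.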

The Download: OpenAI is building a fully automated researcher, and a psychedelic trial blind spot

This is today’s edition of The Download, our weekday newsletter that provides a daily dose of what’s going on in the world of technology.

OpenAI is throwing everything into building a fully automated researcher

OpenAI has a new grand challenge: building an AI researcher—a fully automated agent-based system capable of tackling large, complex problems by itself. The San Francisco firm said the new goal will be its “north star” for the next few years.

By September, the company plans to build “an autonomous AI research intern” that can take on a small number of specific research problems. The intern will be the precursor to the fully automated multi-agent system, which is slated to debut in 2028.

In an exclusive interview this week, OpenAI’s chief scientist, Jakub Pachocki, talked me through the plans. Find out what I discovered.

—Will Douglas Heaven

Mind-altering substances are (still) falling short in clinical trials

Over the last decade, we’ve seen scientific interest in psychedelic drugs explode. Compounds like psilocybin—which is found in magic mushrooms—are being explored for all sorts of health applications, including treatments for depression, PTSD, addiction, and even obesity. But two studies out earlier this week demonstrate just how difficult it is to study these drugs.

For me, they show just how overhyped these substances have become. Find out why here.

—Jessica Hamzelou

This story first appeared in The Checkup, MIT Technology Review’s weekly biotech newsletter. Sign up to receive it in your inbox every Wednesday.

Read more: What do psychedelic drugs do to our brains? AI could help us find out

The must-reads

I’ve combed the internet to find you today’s most fun/important/scary/fascinating stories about technology.

1 OpenAI is building a “super app”

It’s merging ChatGPT, a web browser, and a coding tool into a single app. (The Verge)

+ It’s also buying coding startup Astral to enhance its Codex model. (Ars Technica)

+ The moves come amid a cutback on side projects. (WSJ $)

+ OpenAI has lost ground to Anthropic in the enterprise market. (Axios)

2 The US has charged Super Micro’s co-founder with smuggling AI tech to China

Super Micro is third on Fortune’s list of the fastest-growing companies. (Reuters)

+ GenAI is learning to spy for the US military. (MIT Technology Review)

+ The compute competition is shaping the China-US rivalry. (Politico)

3 The DoJ has taken down botnets behind the largest-ever DDoS attack

They had infected more than 3 million devices. (Wired $)

+ The DoJ has also seized domains tied to Iranian “hacktivists.” (Axios)

4 The Pentagon says Anthropic’s foreign workers are a security risk

It cited Chinese employees as a particular concern. (Axios)

+ Anthropic’s moral boundaries have incensed the DoD. (MIT Technology Review)

5 High oil prices could wreck the AI boom, the WTO has warned

Fears are growing of a prolonged energy shock. (The Guardian)

+ We did the math on AI’s energy footprint. (MIT Technology Review)

6 Jeff Bezos is trying to raise $100 billion to use AI in manufacturing

The funds would buy manufacturing firms and infuse them with AI. (WSJ $)

+ Here’s how to fine-tune AI for prosperity. (MIT Technology Review)

7 Signal’s creator is helping to encrypt Meta’s AI

Moxie Marlinspike is integrating his encrypted chatbot, Confer. (Wired $)

+ Meta is also ditching human moderators for AI again. (CNBC)

+ AI is making online crimes easier. (MIT Technology Review)

8 Prediction market Kalshi has raised $1 billion at a $22 billion valuation

That’s double its valuation from December. (Bloomberg $)

+ Arizona’s AG has charged the company with “illegal gambling.” (NPR)

9 Meta isn’t killing Horizon Worlds for VR after all

It’s canceled plans to dump the metaverse app (for now). (CNBC)

10 A US startup is recruiting an “AI bully”

The successful candidate must test the patience of leading chatbots. (The Guardian)

Quote of the day

“Imagine a sports bar… but just for situation monitoring — live X feeds, flight radar, Bloomberg terminals, and Polymarket screens.”

—Kalshi rival Polymarket unveils its hellish vision for a new bar.

One More Thing

How gamification took over the world

It’s a thought that occurs to every video-game player at some point: what if the weird, hyper-focused state I enter in virtual worlds could somehow be applied to the real one?

For a handful of consultants, startup gurus, and game designers in the late 2000s, this state of “blissful productivity” became the key to unlocking our true human potential. Their vision became the global phenomenon of gamification—but it didn’t live up to the hype.

Instead of liberating us, gamification became a tool for coercion, distraction, and control. Find out why we fell for it—and how we can recover.

—Bryan Gardiner

We can still have nice things

A place for comfort, fun and distraction to brighten up your day. (Got any ideas? Drop me a line.)

+ In a landmark legal win for trolling, Afroman has won his diss track case against the police.

+ This LEGO artist remixes standard sets into completely different iconic objects.

+ Ease your search for aliens with these interactive estimates of advanced civilizations.

+ A rare superbloom in Death Valley has been caught on camera.

The following is a joint announcement from the MIT School of Architecture and Planning, MIT Schwarzman College of Computing, Hasso Plattner Institute, and Hasso Plattner Foundation.

The MIT Morningside Academy for Design (MAD), MIT Schwarzman College of Computing, Hasso Plattner Institute (HPI), and Hasso Plattner Foundation celebrated the launch of the MIT and HPI AI and Creativity Hub (MHACH) at a signing ceremony this week. This 10-year initiative aims to deepen ties between computing and design as advances in artificial intelligence are reshaping how ideas are conceived and shared.

Funded by the Hasso Plattner Foundation, MIT and HPI will work together to foster collaborative interdisciplinary research and support a portfolio of educational programs, fellowships, and faculty engagement focused on AI and creativity, expanding scholarly inquiry into AI applications across disciplines, industries, and societal challenges. The collaboration begins with an inaugural two-day workshop March 19-20 at MIT, bringing together faculty, students, and researchers to set early priorities.

“As we hear from our faculty, as the Information Age gives way to an era of imagination, we expect a new emphasis on human creativity,” reflects MIT President Sally Kornbluth. “Through this collaboration, MIT and HPI are creating a shared space where students and faculty will come together across disciplines to explore new ideas, experiment with emerging tools, and invent new frontiers at the intersection of human creativity and AI.”